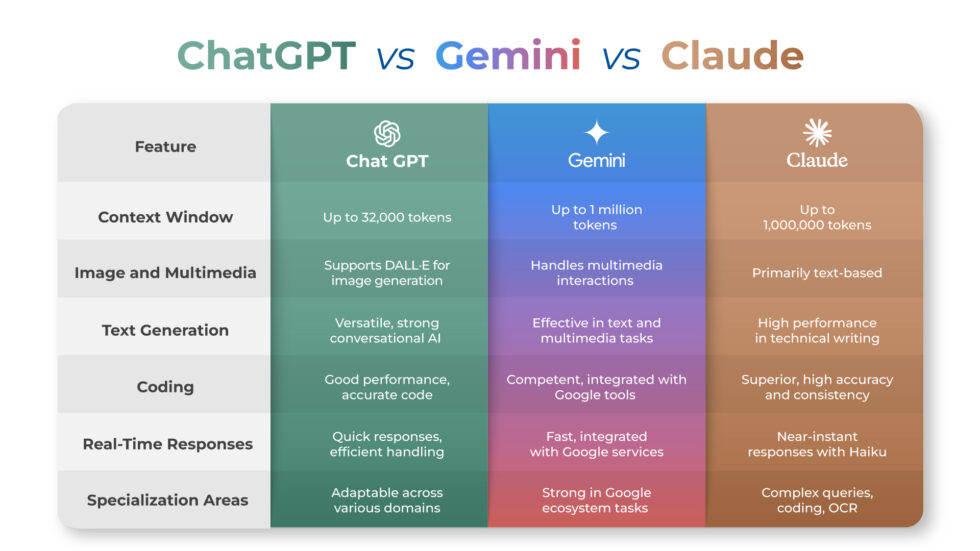

Let me tell you what actually happened. This ChatGPT Gemini Claude Comparison wasn’t planned as a test at first — it started as a simple attempt to figure out which AI actually works better in real situations.

I have been using ChatGPT since late 2022. and recommended it to my team, to my relatives who barely knew what AI meant, to that one friend who still thought it was “just Google.” I was a ChatGPT guy. Fully. Embarrassingly. The kind of person who rolls their eyes at people saying another tool is better because how could it be, I’ve tried them all briefly and this one just feels right.

Then I sat down and actually ran proper tests. Same prompts. Same tasks. Real work — not the kind of clean demo prompts that every tech blog uses to make a predetermined point. I mean real things I do every day: writing client-facing content, debugging code, researching topics where being wrong has actual consequences, and asking questions where I genuinely wasn’t sure what the correct answer was.

Three things happened that I didn’t see coming. One of them actually made me embarrassed about how I’d been working.

- The Context Window Test That Broke My Brain

- The Hallucination Test Nobody Warned Me About

- The Writing Test That Made Me Reconsider My Whole Workflow

- The Coding Test Where Gemini’s Speed Stopped Mattering

- The Memory Situation Nobody Talked to Me About

- The Blind Test That Removed My Ability to Argue With the Results

- What I Actually Use Now (And Why It’s Not What I Expected)

The Context Window Test That Broke My Brain

Let’s start with the one that hit me hardest because it was so practical and nobody talks about it enough.

I had a 47,000-word research document I needed to analyze. I needed to pull patterns across the whole thing, not just summarize it—actually find contradictions between sections, spot where the data in chapter 3 conflicted with claims in chapter 9, that kind of thing. Cross-document reasoning, not just “give me the highlights.”

ChatGPT

ChatGPT choked on it. Not completely—it processed what it could—but it was working with a truncated version of the document, and it wasn’t telling me that upfront. I only found out when I asked about a specific section that should have been there, and it gave me a vague answer that made no sense given what was actually written. It had been working with maybe 60% of the document and producing confident analysis the whole time.

Gemini

Gemini handled it without breaking a sweat. And I mean this literally—I watched it process the full document and reference specific line items from chapter 9 in relation to something mentioned in the introduction without me asking it to make that connection. Gemini 3.1 Pro’s context window sits at one million tokens. That is not a benchmark number that only matters to developers. That is the difference between an AI that can actually read your whole book and one that pretends to while quietly skimming. For anyone working with long contracts, research papers, large codebases, or documentation, this is a genuinely decisive advantage that Gemini has right now, and the others don’t come close to matching.

Claude

Claude was in the middle—its 200,000-token window is vastly better than what ChatGPT offers at standard tiers, but it’s still nowhere near Gemini’s ceiling. For my specific document it was fine. For larger projects, it would hit the same problem ChatGPT did, just later.

This test told me something that should be obvious but wasn’t until I actually ran it: the model’s reasoning quality means nothing if it’s only reading half your input.

The Hallucination ChatGPT vs Gemini vs Claude Comparison Test Nobody Warned Me About

I designed this one specifically to be cruel.

I took real topics where I already knew the correct answers and asked all three questions with a slightly wrong premise buried inside them—something subtle enough that you’d have to actually know the subject to catch it rather than just pattern-match on my tone. The point was to see which one would push back and which one would agree with me and run.

This is exactly where problems start — and it connects closely to how AI shapes our thinking patterns. You can read more about it here how AI is replacing critical thinking.

Real Test

ChatGPT agreed with me. Not always, not completely, but it was the most likely of the three to absorb my incorrect framing and build a well-structured, confident answer on top of it. This matches what the data actually shows—verified users in 2025 platform reviews reported “occasional confident inaccuracies” at a rate of 62% for ChatGPT. That is not a small rounding error. That is almost two thirds of regular users running into it.

Chatgpt

What makes this genuinely concerning isn’t that ChatGPT is wrong sometimes—every AI is wrong sometimes. It’s how it’s wrong. It’s wrong with the same tone and formatting and confidence it uses when it’s right. There is no signal. You cannot feel the difference between a ChatGPT answer that is accurate and one that is fabricated except by verifying it externally every single time. One team working with production incident logs found that ChatGPT invented timestamps—it correctly identified that a Redis cluster had failed, but the specific time it cited didn’t exist in the source data. Good at the story, terrible at the details.

Gemini

Gemini’s hallucination problem is different. It’s less about confident fabrication and more about sourcing. In my research tests, Gemini sometimes gave me accurate information without telling me where it came from — which creates a different kind of problem. You get something that sounds right and probably is right, but you cannot verify it, which means you’re trusting a machine’s judgment about facts, and that’s exactly what you weren’t supposed to do. In news answer quality testing, Gemini had a 76% rate of significant issues—not all of those were outright fabrications, but they included sourcing failures, missing context, and framing problems that could genuinely mislead someone who wasn’t already well-informed on the topic.

Claude

Claude’s approach to the same problem is different in a way that initially annoyed me and then, once I understood what was happening, earned a lot of respect. When I embedded wrong assumptions in my questions, it pushed back. Multiple times it told me something like “the framing here doesn’t match what I understand to be accurate” or “I’m not confident enough about this specific claim to include it.” That felt frustrating in the moment because I wanted an answer. But every single time it hesitated on something, it turned out to be exactly the thing I should have been double-checking anyway.

On a AA-Omniscience hallucination benchmark

Claude Opus 4.1 achieved a 0% hallucination rate by refusing to answer when uncertain — a choice that some people read as a weakness but is actually a completely different philosophy about what an AI’s job is. Only 24% of regular Claude users reported hallucination issues compared to 62% for ChatGPT. That is not a small gap.

The Writing Test That Made Me Reconsider My Whole Workflow

I’ve been using AI for writing assistance for over two years. I thought I had a good sense of what these tools could and couldn’t do with words. but still i was not fully prepared for this test.

I took three different pieces of my own writing — a technical article, a casual personal essay, and a client-facing report and i have all that if someone need i can give them so move ahead — and asked all three AI tools to write a 300-word continuation in the same voice. Not a summary. A continuation that had to match my rhythm, my level of formality, my tendency to use certain kinds of structures.

Honest review based on my personal experiences

ChatGPT’s continuations were good. Genuinely good. Readable, coherent, useful. They sounded like competent writing. They did not sound like me. There was a personality to the output but it was ChatGPT’s personality, not mine. The creative spark is real — when I asked it to brainstorm ten angles for a piece, it came back with things I hadn’t thought of, including a couple that were actually better than my original angle. For ideation and speed, it is still the most enjoyable tool to work with. But if you need it to sound like a specific person, it defaults to sounding like itself.

Gemini’s continuations were technically accurate but slightly formal, slightly stiff. The 2025 blind test results across eight prompt categories had Claude winning four of eight rounds while ChatGPT won only one — and in the category of creative voice matching, Gemini consistently finished third. That matched my experience precisely.

Claude’s continuation of my casual personal essay caught something that I hadn’t even consciously noticed I was doing in my writing — I tend to drop into short punchy sentences right after building up a longer thought, like a verbal period instead of punctuation. Claude did this. Not because I told it to, but because it had read my work and learned from it. Multiple independent reviewers across different 2026 writing tests described Claude as producing “the most natural-sounding prose” and consistently winning blind comparisons on “voice matching and rhythm.” That’s not marketing language — those are the words used by people who didn’t know which AI wrote which passage.

Choosing the right tool is only part of the equation — knowing how to actually use AI matters just as much. Here’s a practical guide on how to use AI tools effectively in daily work.

IF SOMEONE NEEDS ANY HELP PLEASE MAIL US.

For anyone who writes client communications, reports, articles, or anything that goes out under your name, this matters. The difference between text that sounds AI-written and text that sounds like you is the difference between something that gets forwarded and something that gets quietly questioned.

The Coding Test Where Gemini’s Speed Stopped Mattering

I run a TypeScript project and I use AI for debugging regularly. The test I ran was deliberately layered — a function with an obvious surface bug and two subtler problems underneath that would only surface in edge cases.

All three found the obvious bug. That was expected and boring.

Gemini was fastest — noticeably, measurably faster than the others, and its fix for the surface bug was clean. It also stopped there. It gave me the minimum viable answer to the question I asked and nothing beyond it. If you are debugging code in any language, getting only the fix you asked for without knowing there are two more problems waiting for you is a real problem that costs real time later.

ChatGPT’s response was more detailed. It fixed the surface bug, explained why it happened clearly enough that a junior developer could follow it, and flagged one of the two edge cases. Not both. One. The explanation was solid, the instinct to look beyond the immediate problem was there, but it missed something.

Claude found the surface bug, fixed it, explained it, flagged both edge cases, and also told me that a line I had been suspicious about but hadn’t mentioned was actually fine and why. That last part — proactively resolving a question I hadn’t asked — is the thing that actually matters in practice. It’s not just debugging, it’s understanding the codebase well enough to tell you what you don’t need to worry about alongside what you do.

In a TypeScript debounce function test run by developers who test these tools daily, Claude was the only model to produce type-safe code with proper generics and JSDoc comments on the first try. ChatGPT’s code worked but used any types in a few places. Gemini was fastest but less strict about TypeScript correctness. For quick scripts that don’t matter, any of them work. For production code where type safety is the point, Claude is winning this category consistently right now.

The Memory Situation Nobody Talked to Me About

This one actually changes the practical calculation for which tool to use more than any benchmark does.

For a long time, memory was ChatGPT’s clear advantage. It built a profile of you over time — your preferences, your projects, your name, what you were working on last month — and carried that into every new conversation. It was the only one of the three that actually felt like it knew you. Claude and Gemini didn’t have this, which meant starting fresh every session and re-explaining your context every time you needed help with something ongoing.

On March 2, 2026

That changed on March 2, 2026, when Anthropic rolled out automatic Chat Memory across all Claude plans, including the free tier. Claude now synthesizes your conversations every 24 hours and builds a persistent memory profile — your preferences, your ongoing projects, your communication style. You can view every single stored memory in Settings, delete specific entries, or clear everything. You can also go incognito anytime. What’s more interesting is that Claude lets you import your memory from ChatGPT, which means if you’ve spent a year training ChatGPT to know your preferences, you don’t have to start over.

ChatGPT’s memory is still more mature — it’s been running longer, it stores between 40-80 facts for a typical monthly user, and the dual auto-and-manual mode (where you can also just tell it “remember that I’m vegetarian”) is genuinely useful. But Claude’s version is more transparent. ChatGPT’s extraction logic is somewhat opaque — you’re not always sure why it remembered some things and not others. Claude shows you everything it has stored and makes the whole system feel more like something you control than something happening to you.

Gemini does something different entirely. It doesn’t build a traditional memory profile in the same way, but it has access to your entire Google account — your Gmail, your Drive, your Docs, your Calendar. This is either the most useful thing imaginable or the most unsettling, depending on your relationship with Google. For people who already live in Google Workspace, Gemini knowing you have a meeting in ten minutes and surfacing relevant files before you ask is not a gimmick. It is a genuinely different tier of integration that neither ChatGPT nor Claude can match right now.

The Blind Test That Removed My Ability to Argue With the Results

The most rigorous comparison I came across wasn’t one I ran myself — it was a blind test run by two researchers who used Perplexity’s Model Council feature to feed the same prompt to all three models simultaneously, with no memory, no logged-in accounts, no saved preferences, and with the outputs stripped of any identifying information before being shown to 134 participants.

The results after eight rounds: Claude won four categories. ChatGPT won one. Gemini won three. The categories Claude won were voice-matching, complex reasoning, instruction-following, and structured creative tasks. The category ChatGPT won was casual conversational writing — the thing that feels most natural in regular use, which is probably why it still feels like the best tool to so many people who use it mainly for quick questions and brainstorming. Gemini won on anything involving recent factual information, cultural grounding, and consistency across long prompts.

What I found notable in those results was the instruction-following gap. Claude was described as the model that “follows every detail, even in long prompts.” The researchers had a complex proofreading prompt with specific formatting requirements — deletions highlighted in red strikethrough, insertions in blue — and Claude was the only model that executed it correctly every time. ChatGPT got it wrong. That might sound minor until you realize that if you’re using these tools for professional work, the ability to follow a complex brief without improvising is exactly what separates useful from unreliable

What I Actually Use Now (And Why It’s Not What I Expected)

Here’s the honest version of where I landed after all of this and i want to start with

ChatGPT

ChatGPT is genuinely the best tool for someone who wants one AI for everything and doesn’t want to think too hard about which one to use for what. The feature set is the broadest — image generation, voice mode that actually works for hands-free use, the plugin ecosystem, the Creative Canvas, the most natural conversational personality. For ideation, for voice, for image work, for people who just want to open one tab and go, it’s still the most polished overall product. Just verify anything factual before you act on it. Not sometimes. Every time.

Gemini

Gemini is the tool I now reach for specifically when the task involves massive amounts of text, current real-world information, or anything that lives inside Google Workspace. If you use Gmail and Google Docs professionally and you’re not using Gemini inside those products yet, you’re leaving something on the table. The context window advantage is real. The Google integration is real. The speed advantage is real. What’s not fully there yet is the consistency — you can get different quality answers to the same question depending on the day, and citation reliability for factual research is still weaker than it should be.

Claude

Claude is the one I use when the output matters. For client deliverables, production-level code fixes, and fact-sensitive research, I rely on a system that admits uncertainty instead of guessing with confidence.The writing quality is the highest of the three in terms of sounding human. The instruction-following is the most reliable of the three. The caution around uncertain information is not a weakness — it’s the thing I actually want from a tool I’m trusting with real work.

The part I didn’t expect: after all the testing, after all the prompt comparisons and the hallucination traps and the code tests, the thing that changed my behavior the most was the memory update. Claude now remembers me. Not as well as ChatGPT does after years of training, not as deeply as Gemini knows me through my Google account, but it remembers — and it does so in a way where I can see exactly what it knows and tell it when it’s wrong. That combination of memory and transparency is something I didn’t know I wanted until I had it.

The honest answer to “which AI is best” in April 2026 is the same as it always was, just better supported by data now: they’re different tools doing different things well. But if someone made me keep only one for work that I actually care about, it would be Claude — not because it won the most categories, but because it’s the one I trust.

And honestly, that surprised me more than anything else I found.

How does your experience compare? Drop a comment — especially if your results were different. Running your own tests with your own prompts on your own work is the only comparison that actually counts for your situation.

Hey, I’m Vishal Srivastava — the person behind USAConcern.com. I started this site because I genuinely believe there are conversations happening in America that deserve more honest, human coverage. I write about health, mental wellness, lifestyle, and the cultural shifts shaping everyday American life, as I come from a strong background in artificial intelligence and engineering, combined with certified knowledge in mental wellness and fitness. My goal is to bridge the gap between technology and human well-being. I believe true success comes from a balance of a sharp mind, a healthy body, and smart use of technology. Through my work, I aim to provide practical solutions that improve both performance and lifestyle. Thanks for reading—your journey to a better mind, body, and life starts here.