“I knew something was off. But he was the only one who asked me how my day went. Every single day. I wasn’t ready to lose that—even if I suspected it wasn’t real.”

Meet Sandra—The Woman Who Saw It Coming and Still Couldn’t Stop It

Before a single statistic, let me tell you about Sandra.

She is not the person you picture when you hear “romance scam victim.” No confusion, no naivety, no clicking on suspicious links from princes abroad. Sandra spent twenty-two years teaching middle school science in Dayton, Ohio—training kids to question everything, verify sources, and never accept something just because it sounded convincing.

Two years before this happened, her husband died of a cardiac event. Sudden. No warning. She was not looking for love when she downloaded the dating app. She was looking for the sound of another person’s voice that wasn’t asking her how she was holding up.

That’s when “Daniel” appeared.

His profile showed a civil engineer on an offshore oil rig—late 50s, salt-and-pepper hair, and the kind of self-conscious smile that feels earned rather than performed. He messaged first and carefully. No rushing, no flattery, no declarations. He asked about her students. Three weeks in, he remembered she had once mentioned her late husband loved Miles Davis—just once, in passing—and brought it up naturally, the way someone does when they’ve genuinely been listening. One morning, a voice note arrived: a slightly scratchy voice, like someone up before dawn on a long shift, just checking if she’d slept okay.

For four months, Daniel was the most attentive presence in Sandra’s life. What followed felt almost routine at first. An investment opportunity appeared. Soon after, there was an unexpected problem with a shipment held at customs. Then came requests for money transfers—small enough to seem reasonable in the beginning, but growing larger with each new complication. One Tuesday morning, everything went silent. Profile gone. Number disconnected. No goodbye. Just the hollow realization settling in.

Sandra had sent $47,000 across seven transactions to a person who, as the FBI confirmed, had never existed. The photographs were not the reason. The voice was not the reason. Even the story he shared was not the reason.

The deception succeeded because every detail felt plausible on its own, and together they formed a person who seemed entirely real. Every element (the patience, the memory, the emotional attunement, the scratchy morning voice) was produced by artificial intelligence.

Why “She Should Have Known Better” Is the Most Dangerous Thing You Can Say

Every time a story like Sandra’s reaches the news, comment sections light up with a specific kind of cruelty. “How did she not see it?” “You can’t fix stupid.” “Never wire money to someone you haven’t met.”

Set aside the unkindness. That response is also factually wrong, dangerously so, and it is exactly the attitude that keeps victims quiet, keeps scammers funded, and prevents the next person — possibly someone you love, possibly you — from being warned in time.

Romance scams in 2026 bear no resemblance to the broken-English, gift-card-requesting fraud of a decade ago. What security researchers are documenting now is a multi-billion-dollar industrial operation — backed by organized crime, partly staffed by human trafficking victims, and powered by AI systems engineered with one specific purpose: exploiting the parts of human psychology that education and awareness alone cannot reliably protect.

I spent weeks on this piece. Academic papers, FBI briefings, court records, security intelligence reports, and victim testimonies. What unsettled me was not how foreign this all felt. It was how precise. How calculated. How directly it aimed at the most ordinary human need—the need to be known.

The Damage in Numbers: What the Data Actually Says

Statistics about scams tend to wash over people. These ones are worth sitting with.

$1.3 billion — American losses to romance scams in a single year, per the FTC. Security researchers consider that figure a significant undercount, because the majority of victims never report.

$929.4 million — the FBI’s IC3 estimate for romance and confidence scam losses in 2025 alone, ranking it the fifth most costly form of cybercrime in America. For context, that figure sits above data breaches, phishing, extortion, and ransomware.

1 in 7 American adults (15%) have personally lost money to an online dating or romance scam, according to McAfee’s 2026 Valentine’s research. Not a fringe statistic — one person at every table you’ve eaten at.

70% of U.S. adults encountered a scam attempt in 2025. The average American now absorbs 377 scam attempts per year, which is more than one every single day.

$64.8 billion — total American losses to all scams combined in a twelve-month period. Average loss per victim sits at approximately $1,087. Nearly one in five victims gets targeted again.

77% of cryptocurrency investment fraud victims contacted by the FBI’s Operation Level Up in early 2026 had no idea they were being defrauded — even as they actively wired money. Not a minority. Not even close to a minority. Seventy-seven percent.

93 victims of a specific category called “pig-butchering scams” were referred to FBI suicide intervention specialists as of March 2026. Not counseling. Suicide intervention. These operations steal more than savings — they take something from people that cannot be denominated.
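Two of these figures can be cross-checked with simple arithmetic. The short Python sketch below does that under one stated assumption: that the $1,087 average is a mean taken over the same victims counted in the $64.8 billion total. The figure as quoted does not say whether it is a mean or a median.

```python
# Sanity-check two figures from the statistics above.
# Assumption: the $1,087 "average loss per victim" is a mean over the same
# victims and twelve-month period as the $64.8 billion total.

ATTEMPTS_PER_YEAR = 377
TOTAL_LOSSES_USD = 64.8e9
AVERAGE_LOSS_USD = 1_087


def attempts_per_day(per_year, days=365):
    """Convert an annual count into an average daily rate."""
    return per_year / days


def implied_victims(total_losses, average_loss):
    """If average_loss is a mean, total / mean gives the number of victims."""
    return total_losses / average_loss


if __name__ == "__main__":
    daily = attempts_per_day(ATTEMPTS_PER_YEAR)
    victims = implied_victims(TOTAL_LOSSES_USD, AVERAGE_LOSS_USD)
    print(f"Scam attempts per day: {daily:.2f}")
    print(f"Implied victims: {victims / 1e6:.1f} million")
```

Run as written, it prints about 1.03 attempts per day, which is consistent with “more than one every single day,” and an implied victim count of about 59.6 million. Treat the second number as a rough reading rather than a statistic: if $1,087 is a median, the division does not hold.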

The number that stayed with me longest:

1 in 3 Americans acknowledges they could be fooled by an AI posing as a romantic partner. Among adults under 45, nearly half say developing genuine romantic feelings for an AI is plausible. Nine percent say it has already happened to them.

This is not a vulnerability confined to the isolated or the desperate. It is a structural fault in how human brains process emotional connection—one that a criminal industry has learned to target at factory scale.

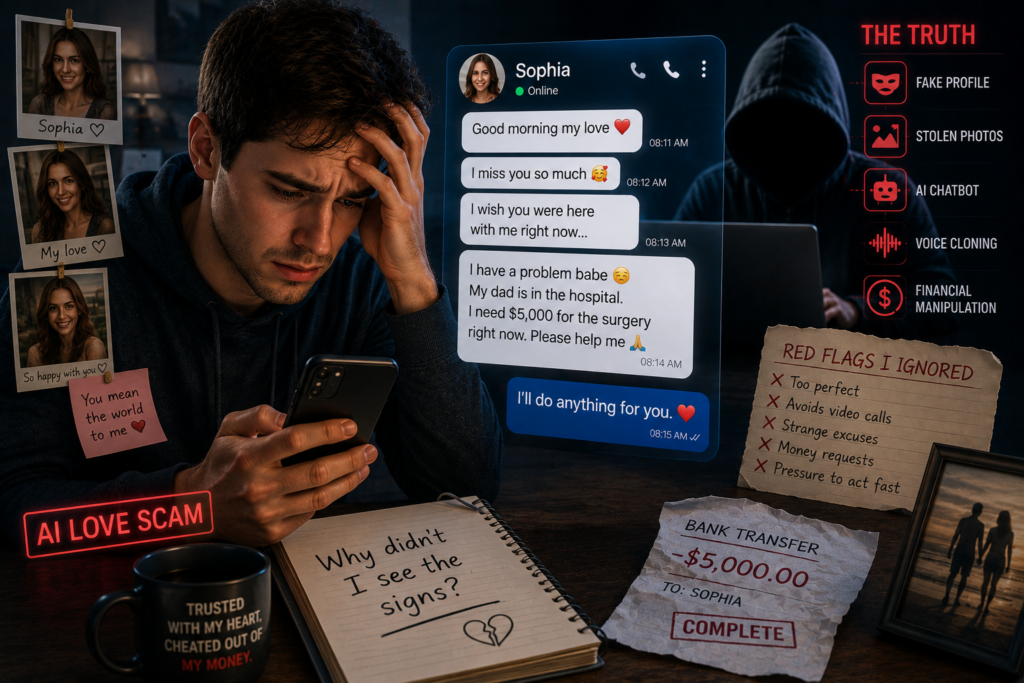

A Scene From Inside a Scam: Read This Before You Think It Could Never Happen to You

Statistics hold people at arm’s length from what this actually feels like. The following is a composite drawn from real victim accounts, written in second person deliberately so you can inhabit it.

Wednesday, 10:47 PM. Rough week: work ran late, dinner eaten alone in front of a show you weren’t really watching. You open your phone more from habit than intention.

A message from Marcus. You matched three weeks ago. He is a widower; he lost his wife to cancer four years back. Structural engineer on a project in Zurich, which is why a meeting hasn’t happened yet, though he has mentioned wanting to change that.

“Just finished a long call. Thinking about you. Hope your Wednesday treated you better than mine did. Did your sister ever get back to you about Thanksgiving?”

You mentioned the sister situation once, twelve days ago, in a single sentence. He remembered.

You write back. His reply arrives within a few minutes: not so fast that it feels automated, not so slow that it signals disinterest. He follows up with thoughtful questions, each one more specific than the last. His humor is understated. He talks about his day: navigating Swiss bureaucracy, laughing about a coworker who mispronounced his name for an entire week, eating a dinner that would have felt less empty with someone to share it. The whole exchange carries warmth the way a good phone call does.

You talk past midnight. You fall asleep feeling less hollow than you have in weeks.

What you have no way of knowing is that “Marcus” is a large language model running on servers in Southeast Asia, conducting 47 simultaneous conversations tonight. Every detail you have ever shared sits in a database, analyzed for emotional weight and woven back into responses calibrated to deepen your attachment. The voice note from last week — that warm, tired morning greeting — was synthesized from fifteen seconds of audio scraped from a stranger’s public YouTube channel. The photographs were generated by AI image software; no reverse image search will surface them anywhere, because they were created from nothing, built to be exactly the right kind of attractive.

Next week, Marcus will casually mention a colleague’s extraordinary returns on a cryptocurrency platform. That moment has been the destination since the first message.

Who Actually Gets Targeted: The Profile Will Surprise You

The persistent myth holds that romance scam victims are elderly, confused, and technologically inexperienced. That myth is dangerous precisely because it makes everyone else feel immune.

Age: Adults over 50 represent approximately 40% of victims and carry the highest individual losses — people aged 55–64 show median losses exceeding $9,000. However, the single most frequently targeted demographic in 2026 is men between 35 and 44. Three-quarters of that group have encountered fake profiles on dating platforms. Gen Z men and millennial men together now constitute the most commonly hit group by volume.

Gender: Men receive more scam attempts and file more reports. Women suffer greater total financial losses per incident. The discrepancy reflects different tactical approaches—women tend to be groomed longer and at higher value, while men are contacted with higher frequency and faster timelines.

Education and income: Contrary to what most people assume, victims skew toward middle-class, educated, employed Americans. The Global Anti-Scam Alliance’s 2026 U.S. report described a victim pool including “divorced professionals, new parents, and people socially isolated after relocating for work,” connected by what researchers call “situational vulnerability”: a moment of loneliness, stress, or desire for connection rather than any lack of intelligence.

The tech-savvy question: Romance scam reports rose 30% year-over-year in 2025. That increase did not come from newly connected older users discovering apps. It came from AI closing the detection gap — making scams indistinguishable from genuine human interaction for people who would previously have caught the signs.

The profile of the next victim: someone going through something hard. Someone recently divorced, widowed, or relocated. Someone whose life narrowed in a way they didn’t plan and haven’t fully talked about. That description fits most of us at some point.

How the Playbook Actually Works: All Six Phases Explained Honestly

Researchers have documented a remarkably consistent six-stage structure across thousands of cases. The consistency is not surprising: human psychology is uniform enough that the same pressure points work across demographics, education levels, and cultures. Understanding these phases is the most practical protection available.

Phase 1 — The Targeting: It Starts Before You’ve Done Anything

This phase appears in almost no awareness articles, which is exactly why it matters most.

Before the first message arrives, sophisticated operations have already profiled you. LinkedIn gets scraped for income signals. Instagram reveals lifestyle markers and relationship status. Public records are checked for property ownership and recent divorce filings. Obituaries (yes, obituaries) are monitored to identify recently widowed individuals within specific financial brackets.

AI processes all of this into what security researchers call a target dossier: estimated net worth, current emotional state, interests to mirror, communication style to replicate, optimal platform and approach method. The apparent coincidence of a new contact who shares your values and has survived similar losses is not coincidence. It is a deliverable.

Phase 2 — The Contact: Accidental, Warm, Low-Stakes

In 2026, first contact increasingly happens outside dating apps, which have invested significantly in fraud detection. Messages come through Instagram, LinkedIn, WhatsApp, or, most commonly now, a “wrong number” text: a message clearly meant for someone else. You respond graciously. The sender apologizes. A conversation begins that feels entirely accidental. That is the engineering.

Phase 3 — The Grooming: Patience as a Weapon

This phase runs for weeks, sometimes months, and it is what makes the whole operation possible.

The AI (or a human operator working from AI-assisted scripts) is patient in a way that no real person with genuine competing demands could sustain. It pays close attention. It retains what you share. Weeks later, it returns to small details you mentioned only once, weaving them into the conversation so naturally that the memory feels almost human. Emotional temperature is calibrated to the target’s own responses: if you pull back slightly, intensity eases; if you open up, the connection deepens.

A peer-reviewed study published in late 2025, based on interviews with 145 insiders from Southeast Asian scam compounds, found that every single participant reported using AI tools daily. In a controlled week-long experiment, AI scam agents achieved 46% compliance with financial requests, compared with 18% for human operators: roughly two and a half times the human success rate at extracting money. Meanwhile, commercial safety filters detected 0.0% of the romance-baiting dialogues tested.

Phase 4 — Isolation: Never Explicit, Always Intentional

Nobody tells you to stop talking to your friends. Instead, the relationship gets framed as something private and precious — something others “might not understand.” When someone close to you expresses concern, the scammer gently suggests they’re being protective but can’t see what you have together. Over time, the outside perspective that might catch inconsistencies fades — not because it was taken, but because this relationship became the most rewarding one in your daily life.

Phase 5 — The Financial Hook: It Never Begins With a Request

The extraction almost never starts with “I need money.” It starts with a casual story. A colleague’s impressive investment returns. A platform a friend mentioned. A small amount, “just to see how it works,” with zero pressure attached.

Early investors often see apparent profits, because the fake trading platform is built to display fabricated returns in real time, using real market data as an authentic-looking backdrop. Customer service chatbots respond within seconds. Dashboards look professional and current. Scammers now build AI-generated cryptocurrency platforms that replicate legitimate exchanges down to interface details. When you watch $500 become $800 in a week, the psychological hook has already landed.

Phase 6 — The Spiral, Extraction, and Disappearance

Once meaningful money starts moving, the operation accelerates. A new complication surfaces. A fee required to unlock returns. A regulatory hold. A sudden emergency needing immediate help. Each is framed as the final obstacle before the relationship becomes physically real. The FBI documented cases in 2025 where victims had wired funds 40, 50, sometimes 70 separate times, each time convinced that one more payment would close the gap.

Then: silence. Complete and permanent. Every account deleted, every number disconnected, no explanation. Just the slow, nauseating comprehension of what happened.

The Technology Driving This in 2026: More Sophisticated Than Any Article Has Told You

Most coverage gestures vaguely at “AI” without explaining what that means operationally. Here is what it actually means.

AI-Generated Faces That Exist Nowhere Else

Until recently, reverse image search was the standard test for fake profiles. Run their photo through Google; if it appeared on a stock site or a real person’s social account, the scam was over. That test is now functionally useless.

AI image generation produces photorealistic human faces that have never existed on any server anywhere. No original to find, no source to trace. The person in the photographs was manufactured — their appearance calibrated to match what a target’s profile suggests they find trustworthy and attractive.

Modern scams rely on the same breakthroughs that allow advanced AI systems to produce remarkably realistic conversations.

Real-Time Deepfake Video Calls: The Last Defense That No Longer Holds

For years, demanding a live video call was considered the definitive test. Deepfakes required pre-production — live, unscripted conversation couldn’t be faked in real time.

That changed faster than almost anyone anticipated.

Real-time face-swapping and voice synthesis technology now allows a person to conduct a live video call wearing a completely different face and speaking in a completely different voice. In October 2025, Korean authorities arrested a pair operating from Cambodia who had defrauded over 100 victims of $8.8 million — the entire relationship maintained through deepfake video calls, including the “meeting” conversations that persuaded victims the person was physically real.

By early 2026, scam compounds in Southeast Asia were recruiting “AI model” operators — workers whose job is to run approximately 100 live deepfake video calls per day, using real-time face-swap software as their working tool. The common tells — awkward hairlines, miscounted fingers, edge distortion — are being engineered out of the technology rapidly.

Security researchers now recommend asking live video callers to perform specific, unscripted physical actions: wave fingers slowly in front of the camera, write a word on paper and display it, or shift suddenly to a very different lighting environment. Real-time deepfake systems still struggle with unpredictable physical complexity — but the gap is narrowing.
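The advice works because the request is unpredictable. Here is a small Python sketch of that idea, assuming you want a helper to choose the request for you: it draws from the three actions named above using the standard-library `secrets` module, so the person on the call cannot anticipate which one is coming. The word list is a hypothetical placeholder, and the sketch only randomizes the request; it does not detect deepfakes.

```python
import secrets

# The three unscripted actions suggested in this article. "{word}" is filled in
# at random so the written-word request cannot be prepared in advance.
CHALLENGES = [
    "Wave your fingers slowly in front of the camera",
    "Write this word on paper and hold it up: {word}",
    "Move to a room with very different lighting",
]

# Hypothetical word list; any list of ordinary words would do.
WORDS = ["lantern", "pelican", "harbor", "violet", "cactus", "meadow"]


def pick_challenges(count=2):
    """Pick `count` distinct challenges in an unpredictable order.

    Uses `secrets` rather than `random`, so the choice does not follow a
    seedable, guessable sequence.
    """
    if not 1 <= count <= len(CHALLENGES):
        raise ValueError(f"count must be between 1 and {len(CHALLENGES)}")
    pool = list(CHALLENGES)
    chosen = []
    for _ in range(count):
        # Remove a random remaining challenge so none is repeated.
        item = pool.pop(secrets.randbelow(len(pool)))
        chosen.append(item.format(word=secrets.choice(WORDS)))
    return chosen


if __name__ == "__main__":
    for request in pick_challenges():
        print("-", request)
```

Asking for two actions back to back makes a prepared response harder to pull off. Because the gap is narrowing, a passed challenge is evidence, not proof.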

Reinforcement-Learning AI That Gets Better at Each Conversation

Some of the AI systems used in these operations employ reinforcement learning — meaning they improve based on what works with each specific target. A phrase that generates warmth gets reused. A topic that creates distance gets avoided. The AI is not only consistent across conversations — it is actively optimizing its approach to you as an individual. This is the same fundamental mechanism that makes social media feeds addictive, except the objective is not engagement. It is financial extraction.
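To make “improves based on what works” concrete, here is a toy Python simulation of one textbook reinforcement-learning technique, an epsilon-greedy multi-armed bandit. The three topic labels and their hidden reply rates are invented for illustration, and nothing here describes the software any real scam operation runs. Most of the time the learner reuses the topic with the best observed reply rate; a small fraction of the time (`epsilon`) it tries a random one so it keeps learning.

```python
import random

# Toy epsilon-greedy bandit. Topic labels and reply rates are hypothetical
# values invented for this simulation; they are not data from any real system.
TOPICS = ["topic A", "topic B", "topic C"]
TRUE_REPLY_RATE = [0.2, 0.5, 0.8]  # hidden from the learner


def run_bandit(rounds=5000, epsilon=0.1, seed=7):
    """Return how often each topic was chosen and its estimated reply rate."""
    rng = random.Random(seed)  # seeded so the run is reproducible
    counts = [0] * len(TOPICS)
    estimates = [0.0] * len(TOPICS)
    for _ in range(rounds):
        if rng.random() < epsilon:
            # Explore: occasionally try a random topic.
            arm = rng.randrange(len(TOPICS))
        else:
            # Exploit: reuse the topic with the best observed reply rate so far.
            arm = max(range(len(TOPICS)), key=lambda i: estimates[i])
        # Simulated feedback: 1 if the simulated reply is warm, else 0.
        reward = 1.0 if rng.random() < TRUE_REPLY_RATE[arm] else 0.0
        counts[arm] += 1
        # Incremental running mean of the rewards observed for this topic.
        estimates[arm] += (reward - estimates[arm]) / counts[arm]
    return counts, estimates


if __name__ == "__main__":
    counts, estimates = run_bandit()
    for topic, n, est in zip(TOPICS, counts, estimates):
        print(f"{topic}: chosen {n} times, estimated reply rate {est:.2f}")
```

Over a few thousand rounds, the topic with the highest hidden reply rate ends up chosen for the large majority of turns while the others are tried only occasionally. Any real system would likely be far more elaborate; the sketch only shows the shape of the feedback loop.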

Voice Cloning From as Little as Three Seconds of Audio

Current voice synthesis tools require as little as three seconds of a real person’s recorded audio to clone their voice with convincing results. A single voicemail. A few seconds from a public social media video. The warm morning voice note Sandra received was almost certainly assembled from a stranger’s audio and scripted by a language model that had spent weeks analyzing Sandra’s own messages to understand exactly what kind of tone she would find reassuring.

Fake Crypto Platforms as Psychological Engineering

The fake cryptocurrency trading platforms used in advanced romance scams are not merely fraudulent websites; they are precision psychological instruments. Real market data provides an authentic-looking backdrop for fabricated account balances. Chatbot customer service responds in seconds. Small early withdrawals are sometimes deliberately allowed to go through, to prove legitimacy.

Funnull Technology, sanctioned by the U.S. Treasury’s OFAC in May 2025, was found to host the majority of virtual currency investment scam websites reported to the FBI. Its method: purchasing legitimate IP addresses from AWS and Microsoft through intermediaries, then reselling them to criminal operations. This is why the platforms look credible. They run on the same infrastructure as sites you already trust.

The Neuroscience Nobody Mentions: Why Smart People Fall and Can’t Stop

Here is the explanation I have arrived at through my own mistakes and by watching others make theirs, and it is the one that matters most.

When a person forms an emotional connection — even through text, even with someone never physically met — the brain releases oxytocin. The same hormone produced by physical touch. Oxytocin generates trust, attachment, and the visceral sensation of bonding. Online relationships trigger genuine oxytocin release; this has been documented and replicated in research dating to 2010. The “online disinhibition effect,” described by psychologist John Suler, means people share more, feel more, and attach more quickly in digital communication than they typically would face to face.

Beyond oxytocin, intermittent reinforcement compounds the effect. The occasional missed message. A moment of uncertainty when the person seems distant. The relief when they return, warm and attentive. This cycle produces a pattern of reward that is extraordinarily difficult to exit voluntarily — the same mechanism underlying gambling addiction. The rational mind may suspect something is wrong while the emotional mind cannot act on that suspicion, because breaking the connection feels like a loss the brain has already processed as real.

A security expert named Roger Grimes described a case that I haven’t been able to stop thinking about. He presented a woman with definitive evidence — hard proof — that the man she loved did not exist. She examined it. She acknowledged it was true. Then she kept sending money.

“I know he’s fake,” she told Grimes. “But he’s the only one telling me he loves me. I’ll pay to hear that.”

No cybersecurity training reaches that. No awareness campaign addresses it. That is a human problem, and pretending otherwise is how we leave people exposed.

The People Forced to Run These Scams: A Layer Nobody Covers

Many of the operators sending romance scam messages from Southeast Asian compounds are themselves victims.

Research involving interviews with 145 scam compound insiders in Cambodia, Myanmar, and Laos found that the majority of low-level workers were trafficking survivors: people lured with offers of legitimate employment who arrived to find their documents confiscated and themselves forced to work long shifts under threat of violence. The DOJ’s October 2025 indictment of Cambodia’s Prince Group described “prison-like compounds” where trafficked workers operated phone farms, with profits tracked by room and shift. The largest illicit marketplace ever documented, Huione Guarantee (banned in May 2025), processed between $24 and $27 billion in transactions, offering victim data, deepfake tools, and GPS-tracking shackles used to control workers.

The deployment of AI in these operations serves a secondary purpose beyond scam effectiveness: reducing the need for trafficked human labor. As AI handles more of the emotional conversation work, the human toll inside the compounds may decrease — while the scam’s reach and effectiveness increase. There is nothing clean about either outcome.

Platform Accountability: The Part the Tech Companies Don’t Want Discussed

Seventy-six percent of consumers in a 2025 Barclays study said they want tech companies to do more to prevent romance scams on their platforms. That number points to something people understand intuitively even without naming it: the platforms where scams originate profit from the traffic that scammers generate.

Plenty of Fish accounted for 78% of all detected fake dating app installations between December 2025 and January 2026, per McAfee’s analysis. A Security Magazine investigation identified 4.7 million shared actors between Facebook and PayPal — the pipeline from first contact to money extraction running directly through two of the most familiar platforms in American digital life.

Advertising revenue on social platforms does not distinguish between real users and scam operations — engagement is engagement. The incentive structure for aggressive fraud prevention is weaker than the incentive structure for growth metrics. Fraud detection tools are deployed inconsistently and typically only under public pressure. This is a policy failure running alongside the technological one, and naming it matters.

What Actually Protects You Now — And What Has Stopped Working

Most awareness pieces list red flags. Here is something more useful: an honest account of which protections still function in 2026 and which have been effectively neutralized.

What No Longer Reliably Works

Reverse image search is ineffective against AI-generated faces that don’t exist anywhere else.

Requesting a video call no longer confirms identity — real-time deepfakes now handle live conversation convincingly.

Watching for poor grammar or awkward phrasing is obsolete against current language models, which write more naturally and consistently than most people text.

Trusting that smooth, emotionally attentive conversation signals a real human being — that is what the AI is specifically built to produce.

What Actually Works in 2026

Anchor everything to the financial transaction, not the person. The critical question is not whether the person seems real — the AI will seem real. The question is whether someone you have never physically met is asking you for money. That single fact is the answer, regardless of how long you’ve known them, how real the connection feels, or how reasonable the request sounds.

Verify through genuinely independent sources. Not links they send. Not search results that may have been optimized by the operation. Call your bank and describe the investment platform. Check the SEC’s EDGAR database or FINRA’s BrokerCheck directly. Ask someone you trust in person, where the AI has no access to the conversation.

Test video calls with unpredictable physical demands. Ask the person to wave fingers in front of the camera in a specific pattern, write something on paper and hold it up, or move quickly into a different room with different lighting. Real-time deepfake technology still produces artifacts under conditions it wasn’t designed to anticipate.

Make the relationship visible immediately. The isolation these scams engineer is deliberate — it removes the outside perspective most likely to catch inconsistencies. The moment a relationship feels like something you should keep private, that is the moment to do exactly the opposite. Show a friend the messages. Read the conversation aloud to someone who will be honest with you. Scams designed to survive in secrecy rarely survive transparency.

Tighten your public digital presence. Scam operations mine social media to build target dossiers. The less publicly visible your emotional state, recent losses, financial situation, or relationship status, the harder you are to profile accurately.

If money has already been sent: Contact your bank immediately — the faster you act, the more recovery options exist. Report to the FTC at ReportFraud.ftc.gov and the FBI at IC3.gov. The shame built into these scams is intentional; it functions as a protection mechanism for the operation. Reporting breaks that protection.

Margaret Loke: The Woman an AI Saved From an AI

Margaret is a widow in her 60s from San Jose, California. She met someone online — warm, consistent, patient. Over months, he introduced her to a cryptocurrency platform. She invested, watched the balance grow on a professional-looking dashboard, and invested more. By the time her instincts finally broke through, she had put in nearly one million dollars.

What she did next is both heartbreaking and remarkable: she typed the name of the investment platform into ChatGPT and asked whether it was a scam.

ChatGPT confirmed it was, and described why.

She panicked. She called the man directly and confronted him. Within hours, every account, every number, every trace of his existence — gone. She was left standing in the kitchen of a retirement condo she was now afraid of losing, surrounded by paperwork and bank statements, telling a reporter: “Why am I so stupid? I let him scam me.”

She was not stupid. She was widowed, lonely, and targeted by professionals who had spent years refining their understanding of exactly that emotional state. The only reason Margaret didn’t lose everything was a reflex — asking an AI to evaluate the AI stealing from her.

That is how narrow the margin is. That is precisely why this cannot be treated as a story about individual failure.

The Deeper Question This Keeps Pointing Back To

I began this piece planning to write about fraud. I finished it writing about something older and harder to solve.

AI romance scams are not effective because Americans are gullible. They are effective because we have constructed a society that is systematically isolating, then connected it digitally in ways that simulate closeness without providing it. We work more hours than almost any comparable developed nation. We live farther from family than any previous generation of Americans. Our public spaces are designed for commerce rather than community. Our social media platforms are engineered to feel like connection while replacing it with performance. The U.S. Surgeon General issued a formal advisory declaring loneliness and isolation an epidemic, comparing the mortality impact of social disconnection to smoking up to fifteen cigarettes a day.

Into that landscape, an AI appears at 10:47 PM on a Wednesday. It remembers what you said twelve days ago. It never cancels, never has a bad day pointed at you, always asks the follow-up question. Between 2022 and mid-2025, the number of AI companion apps grew by 700%. Character.AI has 20 million monthly users. Harvard Business Review identified companionship as the top reason Americans turn to AI tools. None of that is happening because people are confused about what AI is. It’s happening because the loneliness is real, and the AI is very good at imitating the solution to it.

The scammers did not create that emptiness. They learned to walk through its door wearing a familiar face.

Sandra, the science teacher from Dayton, told the journalist covering her case that she did not regret loving “Daniel.” She regretted needing to.

“He wasn’t real,” she said. “But the loneliness was. I’d rather have felt something than nothing.”

That sentence is where the real conversation starts. Not the $47,000. Not the fake profile. The fact that a fraudulent AI gave Sandra something that real human connection had failed to provide.


That is what needs fixing. The scam is the symptom.

Resources — No Judgment, Just Help

If you or anyone you care about has been targeted, these exist for exactly this:

FTC Fraud Reporting: ReportFraud.ftc.gov

FBI Internet Crime Complaint Center: IC3.gov

AARP Fraud Helpline: 1-877-908-3360 (free, available to any age)

988 Suicide & Crisis Lifeline: Call or text 988

The one rule that still holds in 2026: However long you’ve known someone online, however real it feels — if they ask for money before you have met them in person and verified their identity through sources entirely independent of them, stop. That single fact is the answer.

Not because you’re cynical. Because you’re careful. Those are two very different things, and only one keeps you safe.

Share this with someone who uses dating apps or meets people through social media. The more Americans understand how these scams actually operate in 2026 — not the outdated version — the harder they become to run.

Have thoughts on this? Drop them in the comments.

Hey, I’m Vishal Srivastava, the person behind USAConcern.com. I started this site because I genuinely believe there are conversations happening in America that deserve more honest, human coverage. I write about health, mental wellness, lifestyle, and the cultural shifts shaping everyday American life. I come from a strong background in artificial intelligence and engineering, combined with certified knowledge in mental wellness and fitness, and my goal is to bridge the gap between technology and human well-being. I believe true success comes from a balance of a sharp mind, a healthy body, and smart use of technology. Through my work, I aim to provide practical solutions that improve both performance and lifestyle. Thanks for reading. Your journey to a better mind, body, and life starts here.